With the tremendous increase in video recording devices and the resulting abundance of digital video, finding a particular video sequence in ever-growing collections is a major challenge. The retrieval approaches deployed today still mostly rely on metadata to find the desired sequences. With vitrivr, we present a fully working system for indexing and retrieving multimedia data based on its content.

Awards

- Winner Video Browser Showdown 2021

- Winner Best Demo Award at the International Conference on Multimedia Retrieval 2019

- Winner Lifelog Search Challenge 2019

- Winner Video Browser Showdown 2019

- Winner Video Browser Showdown 2017 (iMotion)

- Winner Best Demo Award at the International Conference on Multimedia

- Fritz Kutter Award for Industry Related Thesis in Computer Science

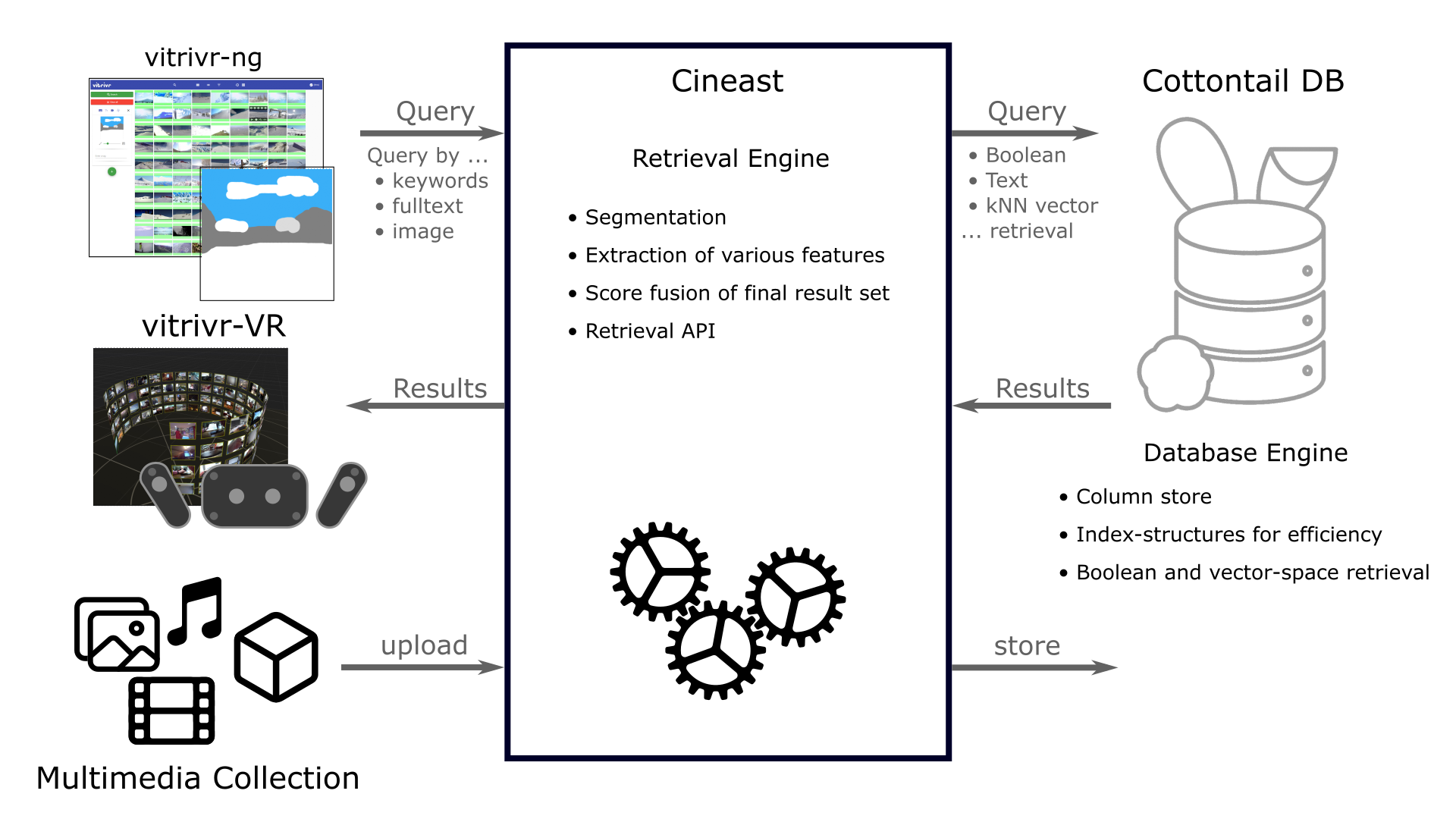

Architecture

The following image shows the overall architecture of vitrivr. vitrivr has three components: a user interface (vitrivr-ng or vitrivr-VR), a retrieval engine (Cineast), and a custom database (Cottontail DB). Cineast is responsible for shot segmentation, for extracting features from multimedia data, and for translating user queries into database queries. Cottontail DB processes these queries and efficiently returns the k nearest neighbors of each query. Cineast then combines the results of the individual queries into a single result set.
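
To make this query flow more concrete, here is a minimal Python sketch of the two steps the paragraph names: a k-nearest-neighbor lookup per feature, followed by combining the per-feature results into one ranked list. It is not Cineast's or Cottontail DB's actual code or API. The function names (`knn`, `fuse`), the feature names (`color`, `edge`), the brute-force cosine-similarity search, and the weighted-average fusion are all assumptions chosen for illustration.

```python
import math
from typing import Dict, List, Tuple

Vector = List[float]
Hits = List[Tuple[str, float]]  # (segment id, similarity score)


def cosine_similarity(a: Vector, b: Vector) -> float:
    """Cosine similarity of two vectors; 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def knn(query: Vector, index: Dict[str, Vector], k: int) -> Hits:
    """Brute-force k-nearest-neighbor search over one feature (stand-in for the database)."""
    scored = [(seg_id, cosine_similarity(query, vec)) for seg_id, vec in index.items()]
    scored.sort(key=lambda hit: hit[1], reverse=True)
    return scored[:k]


def fuse(results: Dict[str, Hits], weights: Dict[str, float]) -> Hits:
    """Hypothetical late fusion: weighted average of per-feature scores.

    A segment missing from a feature's top-k list contributes 0 for that feature.
    """
    total_weight = sum(weights[feature] for feature in results)
    combined: Dict[str, float] = {}
    for feature, hits in results.items():
        for seg_id, score in hits:
            combined[seg_id] = combined.get(seg_id, 0.0) + weights[feature] * score
    fused = [(seg_id, score / total_weight) for seg_id, score in combined.items()]
    fused.sort(key=lambda hit: hit[1], reverse=True)
    return fused


# Toy data: two hypothetical features, each indexing the same three shots.
color_index = {"shot1": [1.0, 0.0], "shot2": [0.0, 1.0], "shot3": [1.0, 1.0]}
edge_index = {"shot1": [0.0, 1.0], "shot2": [1.0, 0.0], "shot3": [1.0, 1.0]}

per_feature = {
    "color": knn([1.0, 0.0], color_index, k=2),
    "edge": knn([1.0, 0.0], edge_index, k=2),
}
fused = fuse(per_feature, weights={"color": 1.0, "edge": 1.0})
print(fused)  # shot3 ranks first: it is the only shot in both top-2 lists
```

Weighted averaging is only one of several possible fusion strategies; the way vitrivr actually combines result sets may differ.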

Components

Cineast, Cottontail DB, vitrivr-ng and vitrivr-VR are open source and under active development.

vitrivr-ng

The browser-based vitrivr UI offers multiple query modes to facilitate retrieval.

Cineast

Cineast is a multi-modal multimedia content retrieval engine. It can retrieve multimedia data based on a diverse range of user input, such as sketches, text, and 3D models.

Cottontail DB

Cottontail DB is a database system for storing and retrieving multimedia data. It supports both Boolean retrieval and similarity search and uses various index structures for efficient retrieval.
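
The combination of Boolean retrieval and similarity search can be illustrated with a minimal Python sketch that first applies a Boolean predicate and then ranks the remaining rows by Euclidean distance to a query vector. The `Row` schema, the `media_type` attribute, and the `filtered_knn` function are hypothetical and do not reflect Cottontail DB's actual data model or query API; the linear scan stands in for the index structures a real database would use.

```python
import heapq
import math
from dataclasses import dataclass
from typing import Callable, Iterable, List, Sequence, Tuple


@dataclass(frozen=True)
class Row:
    """Hypothetical table row: an id, a scalar attribute and a feature vector."""
    segment_id: str
    media_type: str
    feature: Tuple[float, ...]


def filtered_knn(
    rows: Iterable[Row],
    predicate: Callable[[Row], bool],
    query: Sequence[float],
    k: int,
) -> List[Tuple[float, str]]:
    """Boolean filter followed by a brute-force kNN scan (Euclidean distance)."""
    candidates = (row for row in rows if predicate(row))  # Boolean retrieval step
    distances = ((math.dist(row.feature, query), row.segment_id) for row in candidates)
    return heapq.nsmallest(k, distances)  # k smallest distances, in ascending order


table = [
    Row("a", "video", (0.0, 0.0)),
    Row("b", "image", (0.1, 0.0)),
    Row("c", "video", (1.0, 1.0)),
    Row("d", "video", (3.0, 4.0)),
]

# Only video rows are considered, so "b" is excluded despite being close to the query.
result = filtered_knn(table, lambda row: row.media_type == "video", (0.0, 0.0), k=2)
print(result)
```

Filtering before ranking keeps the sketch simple; a real database might instead evaluate the predicate during the index scan or filter the nearest neighbors afterwards.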

vitrivr-VR

The virtual reality-based vitrivr UI offers novel interaction modes in virtual space.

In The Media

Full List of Media Coverage

Google Summer of Code

vitrivr has participated in Google Summer of Code 2016, 2018, and 2021. If you want to contribute to vitrivr, the project ideas left over from our previous GSoC participations are a good source of inspiration.

Contact Us

The best way to contact us is on GitHub through issues, pull requests, and discussions on the different repositories.